From News Articles to Knowledge Graphs with spaCy and NetworkX

Building a complete NLP pipeline to extract entities, discover relationships, and visualize knowledge networks from unstructured text

News articles encode relationships in plain language all the time: “Apple acquired Beats Electronics”, “President Obama visited France”, “Google announced a partnership with Samsung”.

Each sentence is a triple in disguise: two entities and a verb tying them together. If we extract enough of them across enough articles, we get a knowledge graph: a structure we can query, visualize, and reason over.

This post walks through that pipeline end-to-end. We start from raw news text, run named entity recognition with spaCy, infer relationships, and analyze the resulting graph.

As always, you can find the companion notebook on the Graphs for Data Science GitHub repository: https://github.com/DataForScience/Graphs4Sci

We use the AG News corpus, 120,000 articles across four categories (World, Sports, Business, Sci/Tech). For the sake of expediency, we sample 2,000 articles, balanced 500 per category. Loading is a single call to Hugging Face:

    from datasets import load_dataset

    dataset = load_dataset('ag_news', split='train')
    df = dataset.to_pandas()
    df.columns = ['text', 'label']
    df['category'] = df['label'].map({0: 'World', 1: 'Sports',
                                      2: 'Business', 3: 'Sci/Tech'})
    # Balanced sample: 500 articles from each of the four categories
    df = df.groupby('category').sample(n=500, random_state=42)

Two thousand articles are enough that real co-occurrences rise above noise, but few enough that the whole pipeline runs in a few minutes. A light cleaning pass (stripping HTML, collapsing whitespace, and so on) is all the preprocessing the parser needs.
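
The cleaning pass itself isn't shown in this post, so here is a minimal sketch of what it might look like. The helper name `clean_text` and the exact rules are our assumptions for illustration; the notebook may do this differently.

```python
import html
import re

TAG_RE = re.compile(r'<[^>]+>')   # anything that looks like an HTML tag
SPACE_RE = re.compile(r'\s+')     # runs of whitespace, including newlines

def clean_text(text):
    """Hypothetical helper: unescape entities, strip tags, collapse whitespace."""
    text = html.unescape(text)    # '&amp;' becomes '&', '&lt;b&gt;' becomes '<b>'
    text = TAG_RE.sub(' ', text)  # swap tags for a space so neighbouring words stay apart
    return SPACE_RE.sub(' ', text).strip()

sample = 'Apple &amp; Beats:<b>deal</b>   confirmed\n'
print(clean_text(sample))  # Apple & Beats: deal confirmed
```

Applied to the sample, something like `df['text_clean'] = df['text'].map(clean_text)` would produce the `text_clean` column that the NER loop reads.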

Named Entity Recognition

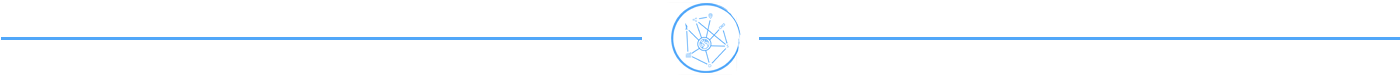

The first step in any knowledge graph pipeline is pulling entities out of the text. We use spaCy’s `en_core_web_lg` model and keep five entity types relevant to news: PERSON, ORG, GPE (countries, cities, states), EVENT, and NORP (nationalities, religious or political groups).

    import spacy

    nlp = spacy.load('en_core_web_lg')
    ENTITY_TYPES = {'PERSON', 'ORG', 'GPE', 'EVENT', 'NORP'}

    rows = []
    # nlp.pipe streams the cleaned articles through the model in batches
    for art_idx, doc in enumerate(nlp.pipe(df['text_clean'], batch_size=100)):
        for sent_idx, sent in enumerate(doc.sents):
            for ent in sent.ents:
                if ent.label_ in ENTITY_TYPES:
                    # sentence_idx is needed later to group entities by sentence
                    rows.append({'article_idx': art_idx,
                                 'sentence_idx': sent_idx,
                                 'sentence': sent.text,
                                 'entity': ent.text,
                                 'label': ent.label_})

On my MacBook Pro, these 2,000 articles parse in less than five minutes. One problem becomes immediately apparent when NER finishes: the same entity can be referred to in different ways. For example, “U.S.”, “US”, “United States”, and “the United States” all refer to the same entity, but spaCy returns them as four distinct strings. To keep the graph from fragmenting into duplicate copies of every common entity, we must merge these variants into a single node.

We implement this with a few simple hand-curated mappings (see notebook for the full list):

    import re

    ABBREV_MAP = {'U.S.': 'United States', 'US': 'United States',
                  'UK': 'United Kingdom', 'EU': 'European Union'}

    def normalize_entity(text):
        text = text.strip()
        # Drop a single leading article
        for prefix in ('the ', 'The ', 'a ', 'A '):
            if text.startswith(prefix):
                text = text[len(prefix):]
                break
        # Drop a trailing possessive
        text = re.sub(r"'s$", '', text)
        # Expand known abbreviations; anything else passes through unchanged
        return ABBREV_MAP.get(text, text)

On AG News, this reduces duplicate node forms by 40-60%, significantly improving the quality of our graph. For a production system, you’d want to perform entity linking against Wikidata or DBpedia. Still, for a simple exploratory pipeline, this gets you most of the way at a fraction of the cost.

Our sample has a wide range of entities covering a wealth of different topics:

Relation Extraction

Entities alone are just a list. The edges are where the graph becomes interesting, and there are two complementary ways to find them.

The first is co-occurrence. If two entities appear in the same sentence, treat them as related and accumulate a count across sentences:

    from itertools import combinations
    from collections import Counter

    # ent_df: one row per entity mention, with article_idx, sentence_idx,
    # and entity_norm (the output of normalize_entity) columns
    pair_counter = Counter()
    for _, group in ent_df.groupby(['article_idx', 'sentence_idx']):
        # Sorting gives every unordered pair a single canonical key
        entities = sorted(set(group['entity_norm']))
        for e1, e2 in combinations(entities, 2):
            pair_counter[(e1, e2)] += 1

Co-occurrence is high-recall and noisy. Two entities mentioned in one sentence aren’t necessarily related. Still, the noise corrects itself at scale: real relationships co-occur repeatedly across articles, while spurious pairings have lower weights and get pruned later. The downside is that the edges are unlabeled: we know two entities are related, but not how.

For labels, we need syntax. The trick is to walk up spaCy’s dependency tree from both entities and look for the lowest common ancestor that is a verb; that verb becomes the relation:

    def get_relation_verb(ent1_span, ent2_span):
        # Collect every ancestor of the first entity's root token
        e1_ancestors = set()
        tok = ent1_span.root
        while tok.head != tok:  # the sentence root is its own head
            e1_ancestors.add(tok.head)
            tok = tok.head
        # Climb from the second entity until we reach a shared ancestor that is a verb
        tok = ent2_span.root
        while tok.head != tok:
            if tok.head in e1_ancestors and tok.head.pos_ == 'VERB':
                return tok.head.lemma_
            tok = tok.head
        return None

This produces labeled triples like (Apple, acquire, Beats), (Obama, visit, France), and (Google, announce, Samsung). Dependency parsing is precise when it works, but still limited. Passages using the passive voice, complex clauses with multiple verbs, and conjunctions all defeat it. In practice, the right move is to use both: co-occurrence for edge weights and coverage, dependency parsing as a labeling layer that fills in verbs wherever it can.
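
To make the "use both" idea concrete, here is one way the two passes could be merged. This is a sketch on toy data: the `triples` list, the choice of the most frequent verb as the label, and the output format are all our assumptions, not necessarily what the notebook does.

```python
from collections import Counter, defaultdict

# Toy stand-ins for the two extraction passes (illustrative values only)
pair_counter = Counter({('Apple', 'Beats'): 3, ('France', 'Obama'): 2,
                        ('Google', 'Samsung'): 1})
# One (entity, verb, entity) triple per sentence where a verb was found
triples = [('Apple', 'acquire', 'Beats'), ('Apple', 'buy', 'Beats'),
           ('Apple', 'acquire', 'Beats'), ('Obama', 'visit', 'France')]

# Count verbs per unordered pair, using the same sorted key as pair_counter
verb_counts = defaultdict(Counter)
for e1, verb, e2 in triples:
    verb_counts[tuple(sorted((e1, e2)))][verb] += 1

# Every co-occurring pair becomes an edge; a verb is attached only when one exists
edges = []
for pair, weight in pair_counter.items():
    verbs = verb_counts.get(pair)
    label = verbs.most_common(1)[0][0] if verbs else None
    edges.append((pair[0], pair[1], {'weight': weight, 'verb': label}))

for edge in edges:
    print(edge)
```

Note that sorting the pair throws away the direction of the relation (who acquired whom). That is fine for an undirected co-occurrence graph, but a directed graph would need to keep the original order.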

Building the Graph and Pruning It Down

NetworkX assembly is mechanical once you have entities and edges. Each node carries its dominant entity type and a mention count; each edge carries a co-occurrence weight and an optional verb label. The interesting work is what comes next.
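
For reference, the assembly step might look roughly like this. The attribute names (`label`, `mentions`, `weight`, `verb`) and the toy inputs are our choices for illustration; the notebook may use different ones.

```python
import networkx as nx

# Toy stand-ins for the pipeline outputs (values are illustrative)
node_info = {'Apple': ('ORG', 5), 'Beats': ('ORG', 3),
             'Obama': ('PERSON', 4), 'France': ('GPE', 2)}
edge_info = [('Apple', 'Beats', 3, 'acquire'),
             ('France', 'Obama', 2, 'visit'),
             ('Apple', 'Obama', 1, None)]   # co-occurrence with no verb found

G = nx.Graph()  # undirected, since co-occurrence has no direction
for name, (ent_type, mentions) in node_info.items():
    G.add_node(name, label=ent_type, mentions=mentions)
for e1, e2, weight, verb in edge_info:
    G.add_edge(e1, e2, weight=weight, verb=verb)

print(G.number_of_nodes(), G.number_of_edges())
print(G.nodes['Obama'], G['Apple']['Beats'])
```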

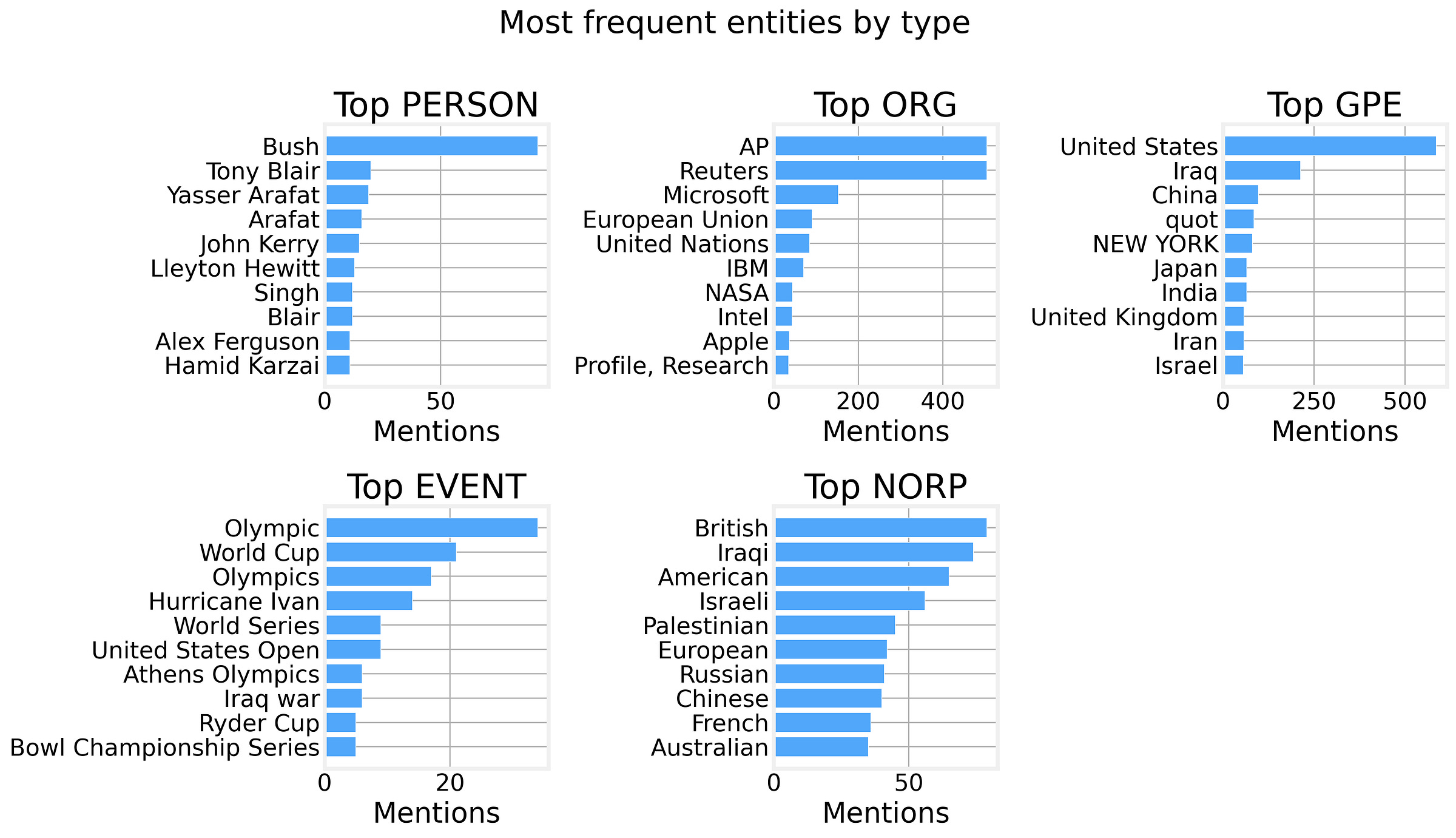

The raw graph is a messy hairball: thousands of nodes, most appearing only once, and edges that are mostly sentence-level coincidences.

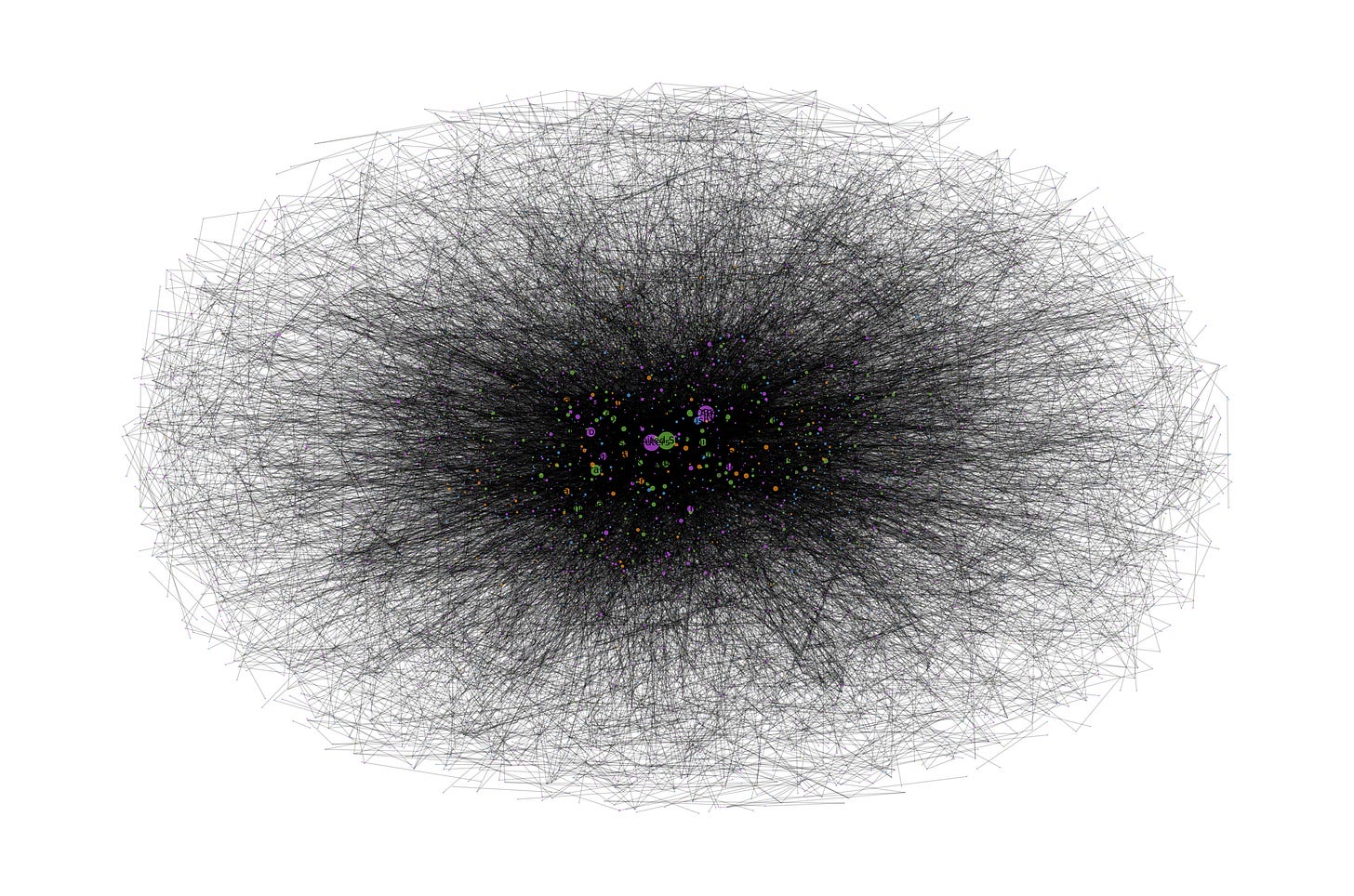

We drop edges with weight below 2, then drop nodes with degree below 2. In our data sample, this removes 70-80% of the structure, and what remains are entities that meaningfully co-occur, connected by relationships that are consistent across multiple articles. The result is much cleaner.
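
The two pruning steps described above can be sketched as follows. The function name `prune_graph` is hypothetical, and we assume edge weights live in a `weight` attribute; the thresholds default to the values used in the text.

```python
import networkx as nx

def prune_graph(G, min_weight=2, min_degree=2):
    """Return a pruned copy of G, leaving the original untouched."""
    H = G.copy()
    # Step 1: remove weak edges
    weak = [(u, v) for u, v, w in H.edges(data='weight', default=1)
            if w < min_weight]
    H.remove_edges_from(weak)
    # Step 2: remove nodes left with too few neighbours (a single pass)
    sparse = [n for n, deg in H.degree() if deg < min_degree]
    H.remove_nodes_from(sparse)
    return H

G = nx.Graph()
G.add_weighted_edges_from([
    ('Apple', 'Beats', 3), ('Apple', 'Google', 2), ('Beats', 'Google', 2),
    ('Google', 'Samsung', 2),   # strong edge, but Samsung ends up with degree 1
    ('Apple', 'France', 1),     # one-off co-occurrence
])
H = prune_graph(G)
print(sorted(H.nodes()))  # ['Apple', 'Beats', 'Google']
```

Because the node filter runs only once, removing a node can leave one of its neighbours below the threshold. Repeating the second step until nothing changes would give the 2-core of the filtered graph, which is a stricter variant.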

Analysis

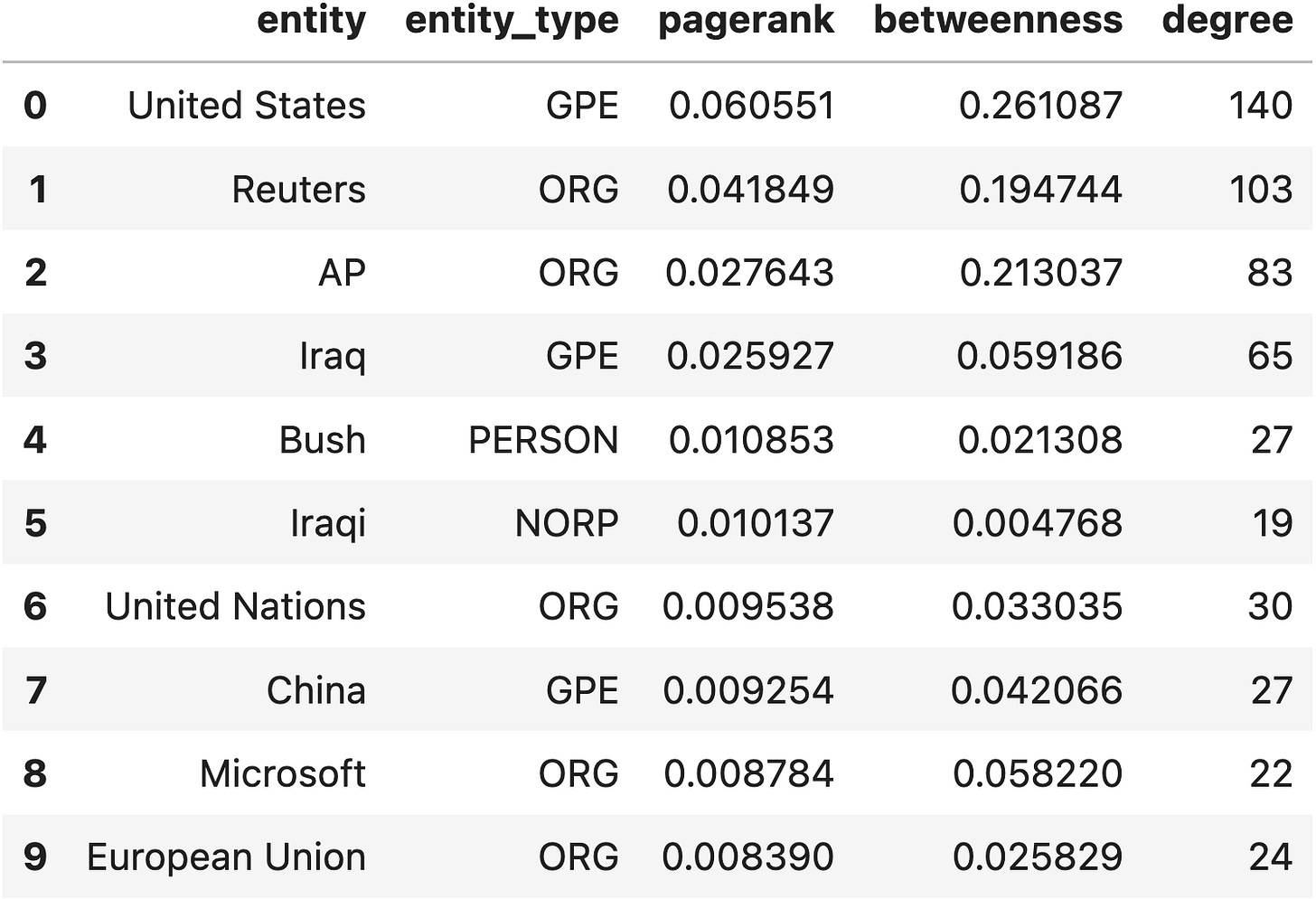

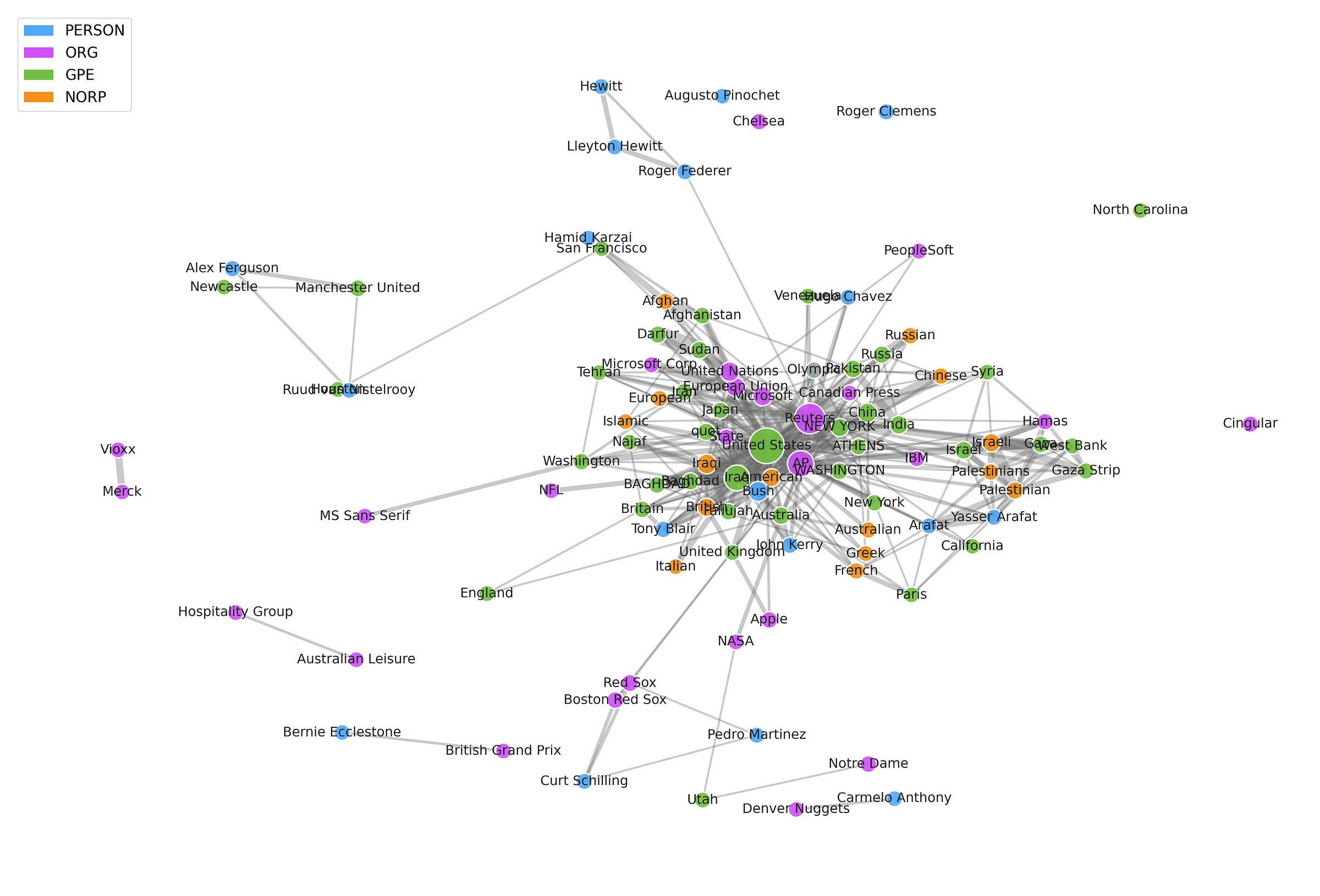

Once the graph is clean, classical network analysis answers most of the interesting questions. PageRank ranks entities by structural prominence (an entity is important if it’s connected to many other important entities) and surfaces the heads of state, large corporations, and geopolitical hot spots that sit at the center of news coverage.
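
As a rough illustration, ranking by PageRank and keeping only the top-ranked nodes takes a few lines in NetworkX. The toy graph and the `top_n` value are ours, and depending on your NetworkX version `nx.pagerank` may need SciPy installed.

```python
import networkx as nx

# Toy graph; entity names and weights are illustrative only
G = nx.Graph()
G.add_weighted_edges_from([
    ('United States', 'Iraq', 5), ('United States', 'United Nations', 4),
    ('United States', 'Microsoft', 2), ('Microsoft', 'Google', 3),
    ('Iraq', 'United Nations', 2),
])

# weight='weight' lets heavier co-occurrence edges pass along more rank
scores = nx.pagerank(G, alpha=0.85, weight='weight')

top_n = 3  # the post keeps 100; 3 keeps the toy example readable
top_nodes = sorted(scores, key=scores.get, reverse=True)[:top_n]
G_top = G.subgraph(top_nodes).copy()  # copy() detaches it from the full graph

for node in top_nodes:
    print(f'{node}: {scores[node]:.3f}')
```

The scores sum to 1, so they can be rescaled directly into node sizes when plotting.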

If we now size the nodes by their PageRank and restrict the graph to the top 100 nodes, we obtain a nice visual representation of the contents of the news knowledge graph:

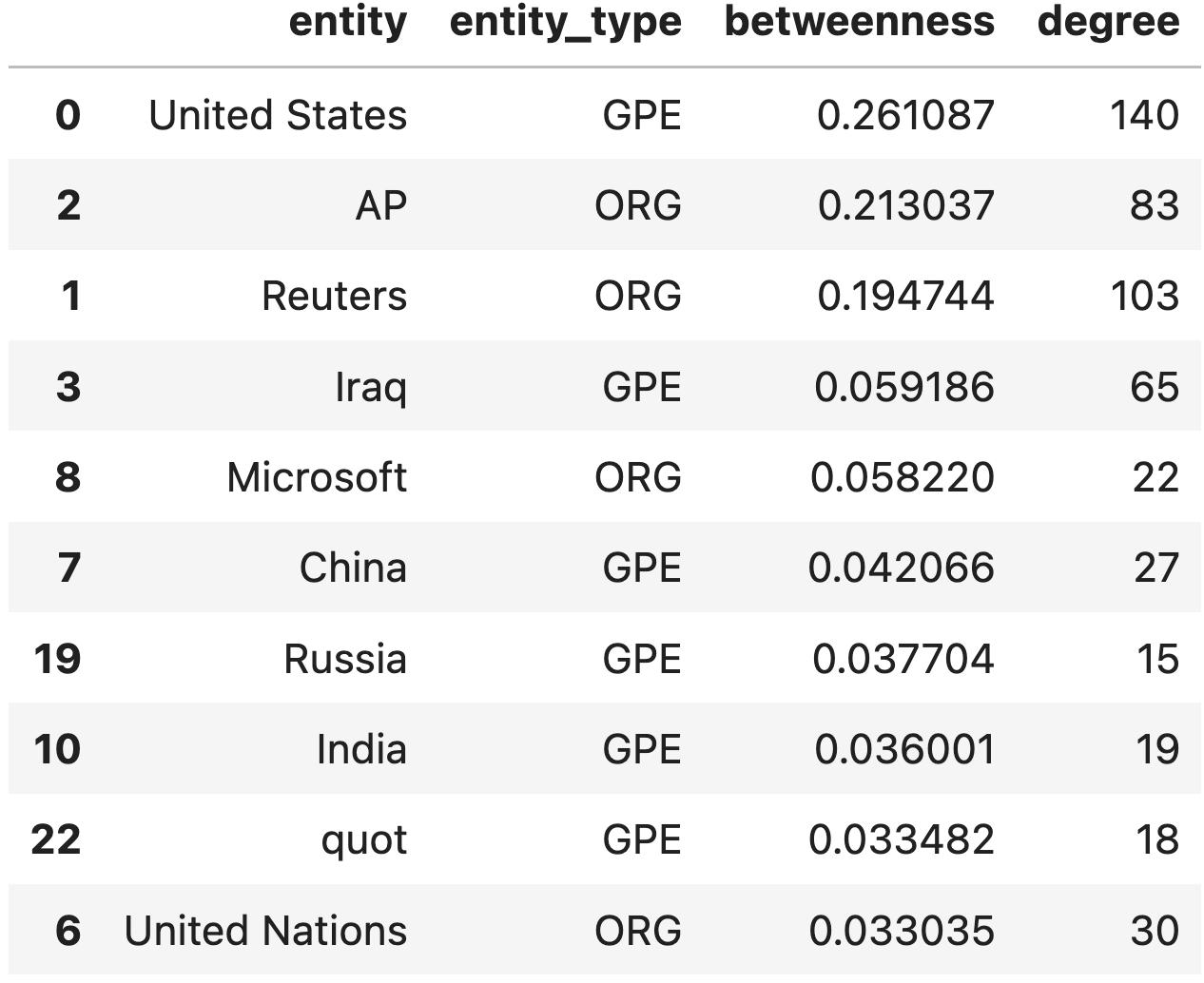

Betweenness centrality, on the other hand, finds bridges between clusters: entities that show up in multiple otherwise-separate stories. A pharmaceutical company that appears in both Business and Sci/Tech, or a country that turns up in both World and Sports, will have a lower PageRank but a high betweenness. That’s how we can identify cross-domain stories.
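
A small toy example shows the effect. The graph below is made up for illustration: two tight clusters joined only through one entity, which has fewer connections than its neighbours but sits on every path between the two stories.

```python
import networkx as nx

G = nx.Graph()
# A business cluster and a science cluster (illustrative names)
G.add_edges_from([('Merck', 'Wall Street'), ('Merck', 'NYSE'),
                  ('Wall Street', 'NYSE')])
G.add_edges_from([('FDA', 'NIH'), ('FDA', 'Harvard'), ('NIH', 'Harvard')])
# One entity that appears in both stories
G.add_edges_from([('Pfizer', 'Merck'), ('Pfizer', 'FDA')])

# Unweighted on purpose: NetworkX reads edge weights as distances here,
# so raw co-occurrence counts would need inverting before being used
bc = nx.betweenness_centrality(G)

bridge = max(bc, key=bc.get)
print(bridge, round(bc[bridge], 2), 'degree:', G.degree(bridge))
```

Here the bridge node has degree 2 while its two neighbours have degree 3, yet it scores highest on betweenness because all nine cross-cluster shortest paths run through it.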

Community detection adds one extra layer of nuance to our analysis. News naturally clusters around storylines: the US presidential election, a European football transfer window, a tech acquisition wave. The Louvain algorithm for community detection finds these clusters by maximizing modularity, a measure of how strongly connected a community is internally relative to its external connections.
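
NetworkX ships a Louvain implementation (version 2.8 or later), so a minimal sketch needs no extra packages. The toy graph, the `seed`, and the use of the `weight` attribute are our assumptions for illustration.

```python
import networkx as nx
from itertools import combinations

# Two storylines with strong internal ties (illustrative names and weights)
election = ['Bush', 'Kerry', 'Ohio', 'Republicans']
tech = ['Google', 'Microsoft', 'Yahoo', 'Oracle']

G = nx.Graph()
for cluster in (election, tech):
    for u, v in combinations(cluster, 2):
        G.add_edge(u, v, weight=5)
G.add_edge('Bush', 'Microsoft', weight=1)  # a single weak link between stories

# seed makes the randomized algorithm reproducible
communities = nx.community.louvain_communities(G, weight='weight', seed=42)
score = nx.community.modularity(G, communities, weight='weight')

for members in communities:
    print(sorted(members))
print(f'modularity: {score:.3f}')
```

Louvain is randomized, so on real data different seeds can give slightly different partitions. Fixing the seed keeps the community count stable from run to run.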

The communities we identify map cleanly to recognizable real-world stories, even though Louvain knows nothing about news categories. The graph structure alone is enough!

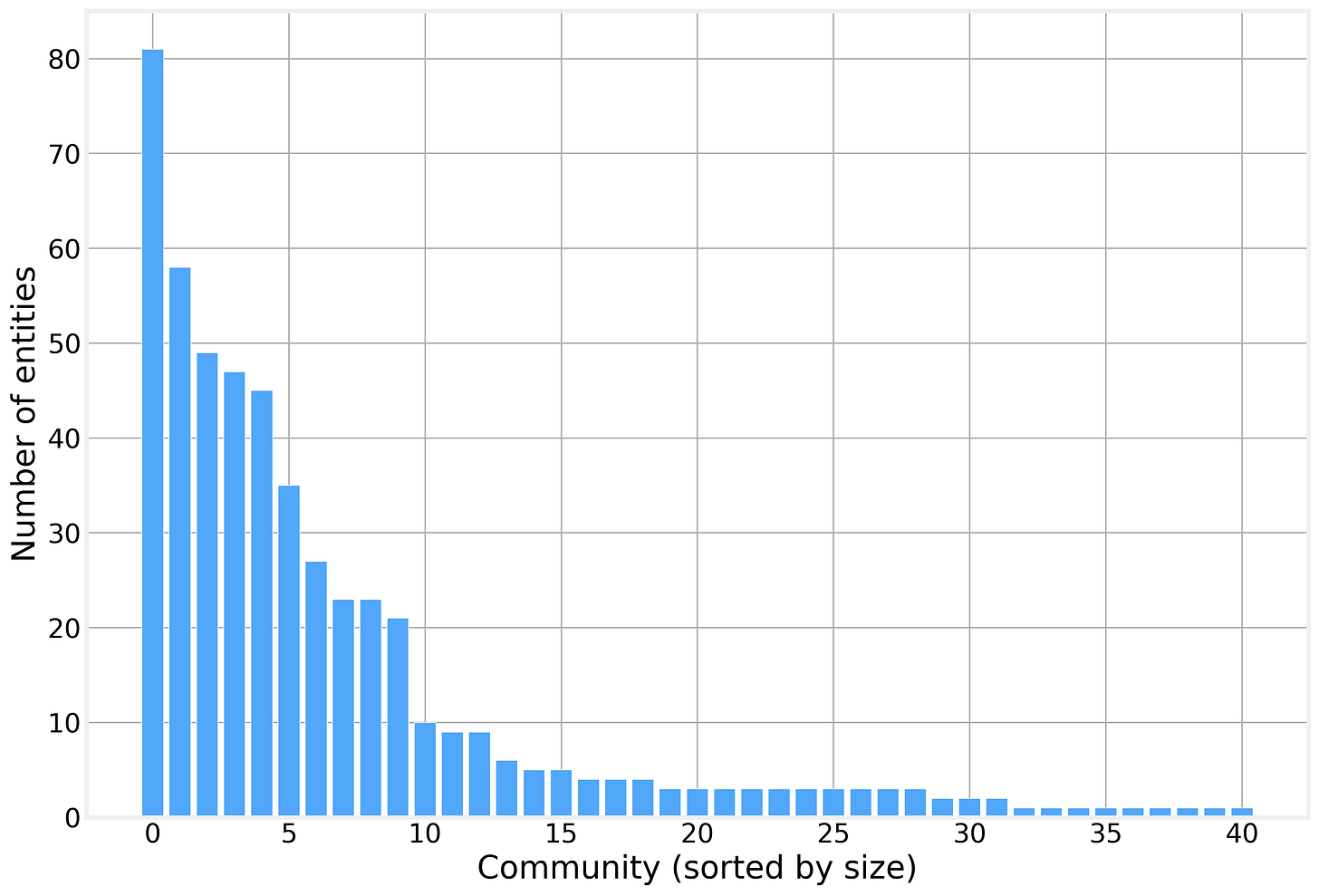

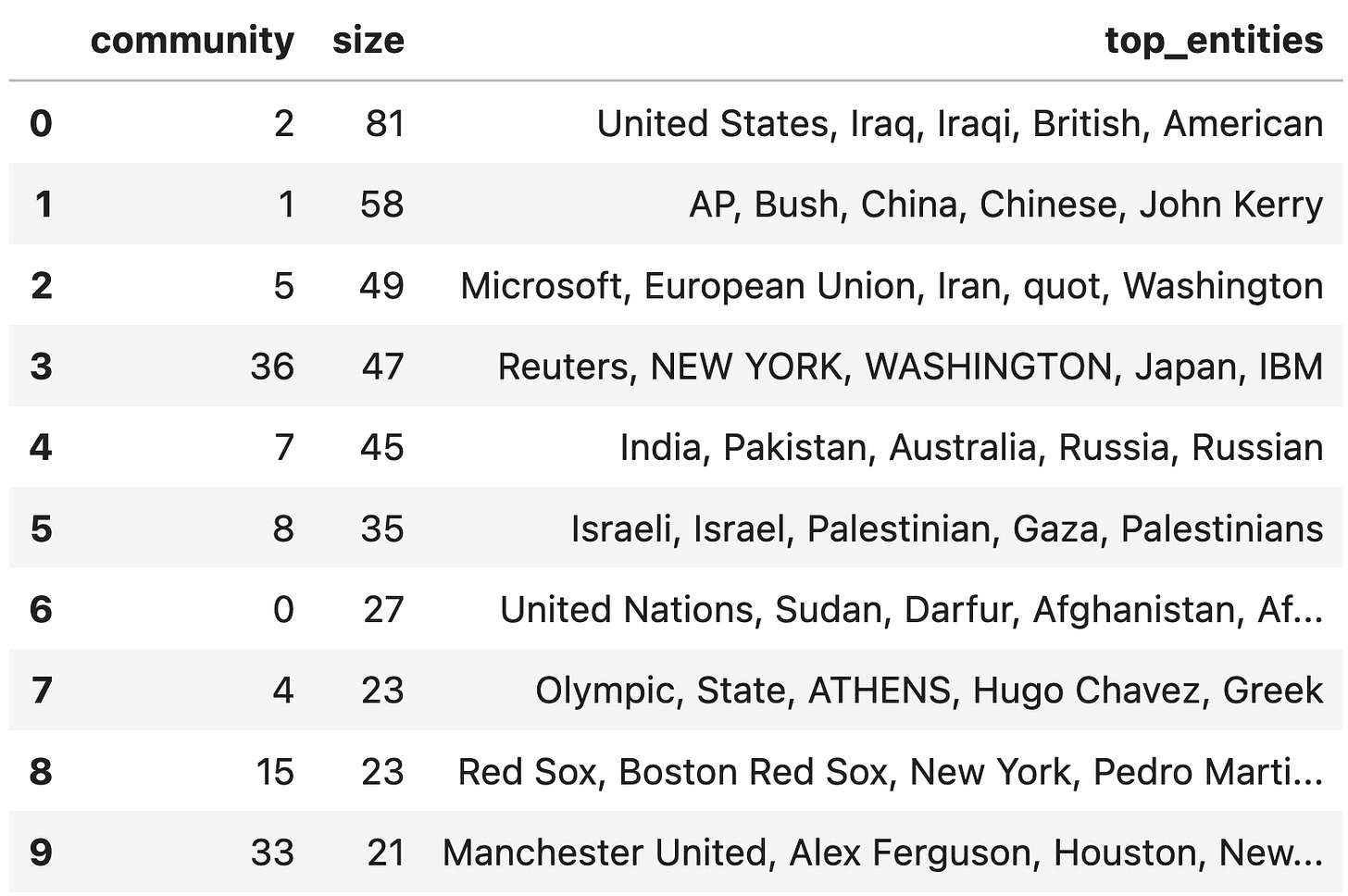

In total, we identify 41 communities of varying sizes:

And a quick look at the nodes that constitute each community makes it clear that they are related, validating the knowledge we included in our knowledge graph:

We hope you enjoyed this Graphs post on the Data for Science Substack and look forward to hearing your thoughts.

You can find all the code for the analysis in this post in our companion GitHub repository: https://github.com/DataForScience/Graphs4Sci

